Welcome to 100 Days Of Kubernetes!

100 Days of Kubernetes is the challenge in which we aim to learn something new related to Kubernetes each day across 100 Days!!!

You Can Learn Anything

A lot of times it is just about finding the right resources and the right learning path.

What can you find in this book

This book provides a list of resources from across the cloud native space to learn about and master Kubernetes. Whether you are just getting started with Kubernetes or you are already using Kubernetes, I am sure that you will find a way to use the resources or contribute :)

Just a note of caution

The notes in this book depend on the community to improve and become accurate. Everything detailed so far is someone's personal understanding at each point in time learning about the respective topics.

Help us improve the notes.

100 Days Of Kubernetes

So what is this challenge all about? The idea (and credit) goes to two existing communities :

The idea is that you publicly commit to learning something new. This way, the community can support and motivate you. Every day that you are doing the challenge, just post a tweet highlighting what you learned with the #100DaysOfKubernetes hashtag.

Additionally, creating your own content based on what you are learning can be highly valuable to the community. For example, once you wrote a blog post on Kubernetes ReplicaSets or similar, you could add it to this book.

The goal is to make this a community-driven project.

Where to get started

Fork the example repository We suggest you to fork the journey repository. Every day that you work on the challenge, you can make changes to the repository to detail what you have been up to. The progress will then be tracked on your GitHub.

Tweet about your progress Share your learnings and progress with the #100DaysOfKubernetes on Twitter.

Join the community We have a channel in this Discord channel -- come say hi, ask questions, and contribute!

Contribute

You can find more information on contributions in the README of this GitHub repository.

Structure of the book

The book is divided into several higher-level topics. Each topic has several sub-topics that are individual pages or chapters.

Those chapters have a similar structure:

Title

The title of the page

100Days Resources

This section highlights a list of community resources specific to the topics that is introduced. Additionally, this is where you can include your own content, videos and blog articles, from your 100DaysOfKubernetes challenge.

Learning Resources

A list of related learning resources. Different to '100Days Resources', these do not have to be specific to 100DaysOfKubernetes.

Example Notes

This section provides an introduction to the topics. The goal is to advance each topics over time. When you are first time learning about a topic, it is usually best to take your own notes but sometimes having a starting point and examples is helpful.

List of Example Notes by the Community

About the contributors

If you have contributed to this book, please add yourself to the list :)

Anais Urlichs

What is Kubernetes and why do we want it?

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

Example Notes

Kubernetes builds on Borg and the lessons learned by Google for operating Borg for over 15 years.

Borg was used by Google internally to manage its systems.

For more information here is the full paper https://research.google/pubs/pub43438/

What is Kubernetes?

Kubernetes is an open-source container Orchestration Framework.

At its root, it manages containers — to manage applications that are made of of containers- physical machines, virtual machines, hybrid environments

According to the kubernetes.io website, Kubernetes is:

"an open-source system for automating deployment, scaling, and management of containerized applications".

What problems does it solve

- Following the trend from Monolithic to Microservices — traditionally, an application would be a Monolithic application — which requires the hardware to scale with the application. In comparison, Kubernetes deploys a large number of small web servers.

- Containers are the perfect host for small self-contained applications

- Applications comprised of 100s of containers — managing those with scripts can be really difficult and even impossible

- Kubernetes helps us with the following: connecting containers across multiple hosts, scaling them, deploying applications without downtime, and service discovery among several other aspects

The benefits of splitting up your application from Monolithic into Microservices is that they are easier to maintain. For instance:

Instead of a large Apache web server with many httpd daemons responding to page requests, there would be many nginx servers, each responding.

Additionally, it allows us to separate matters of concerns within our application i.e. decoupling the architecture based on responsibilies.

Additionally, Kubernetes is an essential part of

- Continuous Integration

- Continuous Delivery

Orchestration tools such as Kubernetes offer:

- High availability

- Scalability: Applications have higher performance e.g. load time

- Disaster Recovery: The architecture has to have a way to back-up the data and restore the state of the application at any point in time

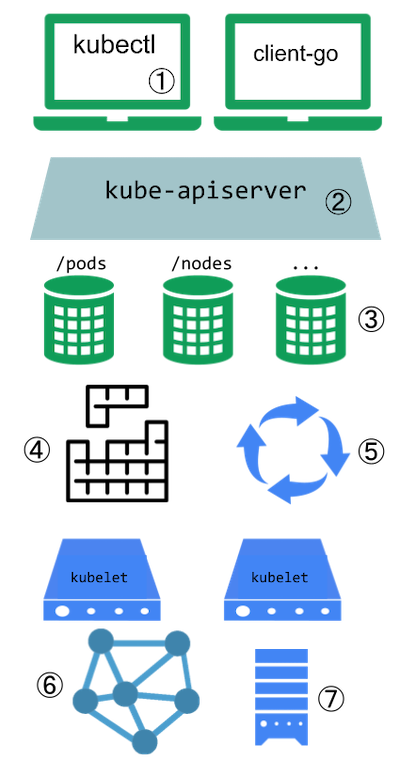

How does the architecture actually look like:

- You have a master node: Runs several Kubernetes processes that are necessary to run the container's processes — e.g. an API server ⇒ the entry point to the Kubernetes cluster (UI, API, CLI); then it needs to have a Controller Manager ⇒ keeps track of what is happening e.g. detects when a pod dies ⇒ detects state changes; and lastly, it contains a Scheduler ⇒ this ensures the Pod placement (more on pods later); etcd database ⇒ key-value storage that holds the state of the Kubernetes cluster at any point in time; and the last thing that is needed is the Virtual Network that spans across all the nodes in the cluster

- And worker nodes: contains Containers of different applications; here is where the actual work is happening

Note that worker nodes are usually much bigger because they are running the containers. The master node will only run a selection of processes. Note now master node is named as control plane.

Each node will have multiple pods with containers running on them. 3 processes have to be present on all nodes

- Container Runtime e.g. Docker

- Kubelet; which is a process of Kubernetes itself that interacts with both, the container runtime and the node — it is responsible for taking our configuration and starting a pod inside the node

Usually a Kubernetes cluster has several nodes running in parallel.

- nodes communicate with services between each other. Thus, the third process that has to be installed on every node is Kube Proxy that forwards requests between nodes and pods— the communication works in a performant way with low overhead. Requests are forwarded to the closest pod.

Kubernetes Concepts — Pods

Pod ⇒ the smallest unit that you, as a Kubernetes user will configure

- Pods are a wrapper of a container; on each worker node, we will have multiple containers

- Usually, you would have one application, one container per pod

- Each Pod has its own IP address — thus, each pod is its own self-containing server. This allows pods to communicate with each other — through their internal IP addresses

Note that we don't actually create containers inside the Kubernetes cluster but we work with the pods that are an abstraction layer over the containers. Pods manage the containers without our intervention

In case a pod dies, it can be configured to recreate (as per restart policy) and it will get a new IP address.

A service is used as an alternative for a pods IP address. Resulting, there is a service that sits in front of each pod that abstracts away the dynamic IP address. Resulting, the lifecycle of a pod and the IP address are not tied to each other-

If a pod behind the service dies, it gets recreated.

A service has two main functions:

- Providing an IP address to the pod(s)

- It is a Loadbalancer (what the hack is that 😆 — more on this later)

How do we create those components

- All configuration goes through the master node — UI, API, CLI, all talk to the API server within the master node — they send the configuration request to the API server

- The configuration is usually in YAML format ⇒ a blue print for creating pods. The Kubernetes agents convert the YAML to JSON prior to persistence to the database.

- The configuration of the requirements are in a declarative format. This will allow it to compare the desired state to the actual state (more on this later)

Developer Workflow:

- Create Docker images based on your application

- Use Docker and Kubernetes

- A CI pipeline to build, test, and verify Docker images

- "You must be able to perform rolling updates and rollbacks, and eventually tear down the resource when no longer needed." — the course

This requires flexible and easy to use network storage.

Kubernetes Architecture

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

Example Notes

We can divide the responsibilities within a Kubernetes cluster between a main node and worker nodes. Note that in small clusters we may have one node that takes the responsibilities of both.

Main Node

Where does the orchestration from Kubernetes come in? These are some characteristics that make up Kubernetes as a container orchestration system:

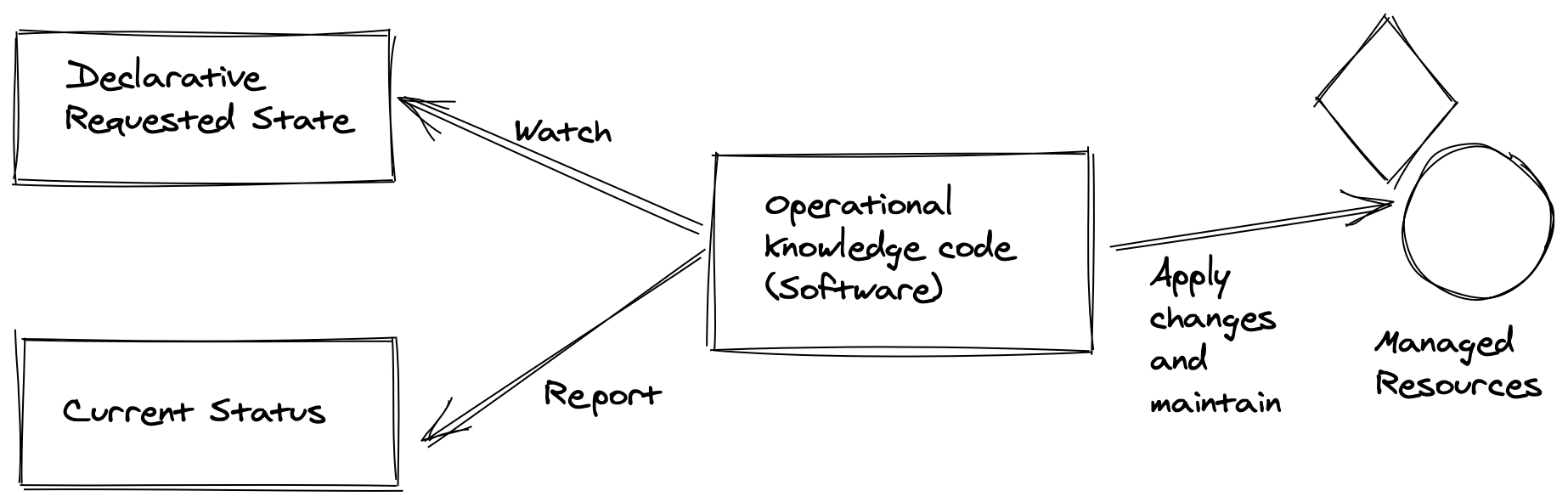

- Managed by several operators and controllers — will look at operators and controllers later on. Operators make use of custom resources to manage an application and their components.

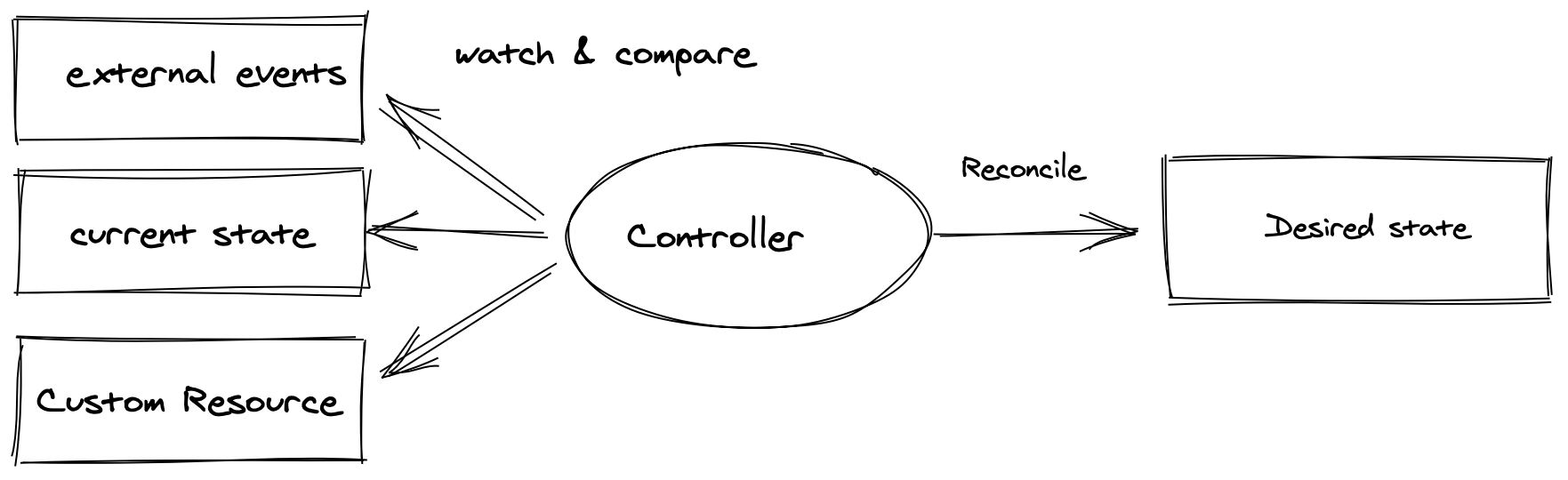

- "Each controller interrogates the kube-apiserver for a particular object state, modifying the object until the declared state matches the current state." In short, controllers are used to ensure a process is happening in the desired way.

- "The ReplicaSet is a controller which deploys and restarts containers, Docker by default, until the requested number of containers is running." In short, its purpose is to ensure a specific number of pods are running.

Note that those concepts are details in further sections of the book.

There are several other API objects which can be used to deploy pods. A DaemonSet will ensure that a single pod is deployed on every node. These are often used for logging and metrics. A StatefulSet can be used to deploy pods in a particular order, such that following pods are only deployed if previous pods report a ready status.

API objects can be used to know

-

What containerized applications are running (and on which nodes)

-

The resources available to those applications

-

The policies around how those applications behave, such as restart policies, upgrades, and fault-tolerance

-

kube-apiserver

- Provides the front-end to the cluster's shared state through which all components interact

- Is central to the operation of the Kubernetes cluster.

- Handles internal and external traffic

- The only agent that connects to the etcd database

- Acts as the master process for the entire cluster

- Provides the out-wards facing state for the cluster's state

- Each API call goes through three steps: authentication, authorization, and several admission controllers.

-

kube-scheduler

- The Scheduler sees a request for running a container and will run the container in the best suited node

- When a new pod has to be deployed, the kube-scheduler determines through an algorithm to which node the pod should be deployed

- If the pod fails to be deployed, the kube-scheduler will try again based on the resources available across nodes

- A user could also determine which node the pod should be deployed to —this can be done through a custom scheduler

- Nodes that meet scheduling requirements are called feasible nodes.

- You can find more details about the scheduler on GitHub.

-

etcd Database

- The state of the cluster, networking, and other persistent information is kept in an etcd database

- etcd is a consistent and highly-available key value store used as Kubernetes' backing store for all cluster data

- Note that this database does not change; previous entries are not modified and new values are appended at the end.

- Once an entry can be deleted, it will be labelled for future removal by a compaction process. It works with curl and other HTTP libraries and provides reliable watch queries.

- Requests to update the database are all sent through the kube api-server; each request has its own version number which allows the etcd to distinguish between requests. If two requests are sent simultaneously, the second request would then be flagged as invalid with a 409 error and the etcd will only update as per instructed by the first request.

- Note that it has to be specifically configured

-

Other Agents

- The kube-controller-manager is a core control loop daemon which interacts with the kube-apiserver to determine the state of the cluster. If the state does not match, the manager will contact the necessary controller to match the desired state. It is also responsible to interact with third-party cluster management and reporting.

- The cluster has several controllers in use, such as endpoints, namespace, and replication. The full list has expanded as Kubernetes has matured. Remaining in beta as of v1.16, the cloud-controller-manager interacts with agents outside of the cloud. It handles tasks once handled by kube-controller-manager. This allows faster changes without altering the core Kubernetes control process. Each kubelet must use the --cloud-provider-external settings passed to the binary.

-

There are several add-ons which have become essential to a typical production cluster, such as DNS services. Others are third-party solutions where Kubernetes has not yet developed a local component, such as cluster-level logging and resource monitoring.

"Each node in the cluster runs two processes: a kubelet and a kube-proxy."

Kubelet: handles requests to the containers, manages resources and looks after the local nodes

The Kube-proxy creates and manages networking rules — to expose container on the network

-

kubelet

Each node has a container runtime e.g. the Docker engine installed. The kubelet is used to interact with the Docker Engine and to ensure that the containers that need to run are actually running.

Additionally, it does a lot of the work for the worker nodes; such as accepting API calls for the Pods specifications that are either provided in JSON or YAML.

Once the specifications are received, it will take care of configuring the nodes until the specifications have been met

Should a Pod require access to storage, Secrets or ConfigMaps, the kubelet will ensure access or creation. It also sends back status to the kube-apiserver for eventual persistence.

-

kube-proxy

The kube-proxy is responsible for managing the network connectivity to containers. To do that is has iptables.

"iptables is a user-space utility program that allows a system administrator to configure the IP packet filter rules of the Linux kernel firewall, implemented as different Netfilter modules."

Additional options are the use of namespaces to monitor services and endpoints, or ipvs to replace the use of iptables

To easily manage thousands of Pods across hundreds of nodes can be a difficult task to manage. To make management easier, we can use labels, arbitrary strings which become part of the object metadata. These can then be used when checking or changing the state of objects without having to know individual names or UIDs. Nodes can have taints to discourage Pod assignments, unless the Pod has a toleration in its metadata.

Multi-tenancy

When multiple-users are able to access the same cluster

Additional security measures can be taken through either of the following:

-

Namespaces: Namespaces can be used to "divide the cluster"; additional permissions can be set on each namespace; note that two objects cannot have the same name in the same namespace

-

Context: A combination of user, cluster name and namespace; this allows you to restrict the cluster between permissions and restrictions. This information is referenced by ~/.kube/config

Can be checked with

kubectl config view -

Resource Limits: Provide a way to limit the resources that are provided for a specific pod

-

Pod Security Policies: "A policy to limit the ability of pods to elevate permissions or modify the node upon which they are scheduled. This wide-ranging limitation may prevent a pod from operating properly. The use of PSPs may be replaced by Open Policy Agent in the future."

-

Network Policies: The ability to have an inside-the-cluster firewall. Ingress and Egress traffic can be limited according to namespaces and labels as well as typical network traffic characteristics.

Kubernetes Pods

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

- https://www.vmware.com/topics/glossary/content/kubernetes-pods

- https://cloud.google.com/kubernetes-engine/docs/concepts/pod

- https://kubernetes.io/docs/concepts/workloads/pods/

Example Notes

Overview

Pods are the smallest unit in a Kubernetes cluster; which encompass one or more application ⇒ it represents processes running on a cluster. Pods are used to manage your application instance.

Here is a quick summary of what a Pod is and its responsibilities:

- In our nodes, and within our Kubernetes cluster, the smallest unit that we can work with are pods.

- Containers are part of a larger object, which is the pod. We can have one or multiple containers within a pod.

- Each container within a pod share an IP address, storage and namespace — each container usually has a distinct role inside the pod.

- Note that pods usually operate on a higher level than containers; they are more of an abstraction of the processes within a container than the container itself.

- A pod can also run multiple containers; all containers are started in parallel ⇒ this makes it difficult to know which process started before another.

- Usually, one pod is used per container process; reasons to run two containers within a pod might be logging purposes.

- initContainers can be used to ensure some containers are ready before others in a pod. To support a single process running in a container, you may need logging, a proxy, or special adapter. These tasks are often handled by other containers in the same Pod.

- Usually each pod has one IP address.

- You may find the term sidecar for a container dedicated to performing a helper task, like handling logs and responding to requests, as the primary application container may have this ability.

Running multiple containers in one pod

An example for running multiple containers within a pod would be an app server pod that contains three separate containers: the app server itself, a monitoring adapter, and a logging adapter. Resulting, all containers combines will provide one service.

In this case, the logging and monitoring container should be shared across all projects within the organisation.

Replica sets

Each pod is supposed to run a single instance of an application. If you want to scale your application horizontally, you can create multiple instance of that pod.

It is usually not recommended to create pods manually but instead use multiple instances of the same application; these are then identical pods, called replicas.

Such a set of replicated Pods are created and managed by a controller, such as a Deployment.

Connection

All the pods in a cluster are connected. Pods can communicate through their unique IP address. If there are more containers within one pod, they can communicate over localhost.

Pods are not forever

Pods are not "forever"; instead, they easily die in case of machine failure or have to be terminated for machine maintenance. When a pod fails, Kubernetes automatically (unless specified otherwise) spins it up again.

Additionally, a controller can be used to ensure that the pod is "automatically" healing. In this case, the controlled will monitor the stat of the pod; in case the desired state does not fit the actual state; it will ensure that the actual state is moved back towards the desired state.

It is considered good practice to have one process per pod; this allows for easier analysis and debugging.

Each pod has:

- a unique IP address (which allows them to communicate with each other)

- persistent storage volumes (as required) (more on this later on another day)

- configuration information that determine how a container should run

Pod lifecycle

(copied from Google)

Each Pod has a PodStatus API object, which is represented by a Pod's status field. Pods publish their phase to the status: phase field. The phase of a Pod is a high-level summary of the Pod in its current state.

When you run

kubectl get pod Link

to inspect a Pod running on your cluster, a Pod can be in one of the following

possible phases:

- Pending: Pod has been created and accepted by the cluster, but one or more of its containers are not yet running. This phase includes time spent being scheduled on a node and downloading images.

- Running: Pod has been bound to a node, and all of the containers have been created. At least one container is running, is in the process of starting, or is restarting.

- Succeeded: All containers in the Pod have terminated successfully. Terminated Pods do not restart.

- Failed: All containers in the Pod have terminated, and at least one container has terminated in failure. A container "fails" if it exits with a non-zero status.

- Unknown: The state of the Pod cannot be determined.

Limits

Pods by themselves do not have a memory or CPU limit. However, you can set limits to control the amount of CPU or memory your Pod can use on a node. A limit is the maximum amount of CPU or memory that Kubernetes guarantees to a Pod.

Termination

Once the process of the pod is completed, it will terminate. Alternatively, you can also delete a pod.

Setup your first Kubernetes Cluster

100Days Resources

Learning Resources

- What is Kubernetes: https://youtu.be/VnvRFRk_51k

- Kubernetes architecture explained: https://youtu.be/umXEmn3cMWY

Example Notes

Today, I will get started with the book: The DevOps 2.3 Toolkit; and will work my way through the book.

Chapter 1 provides an introduction to Kubernetes; I will use it to optimise the notes from the previous day.

Chapter 2 provides a walkthrough on how to set-up a local Kubernetes cluster using minikube or microk8s. Alternatively, kind could also be used to create a local Kubernetes cluster or if you have Docker Desktop you could use directly the single node cluster included.

Prerequisites

- Have Docker installed (if not go ahead and do it): https://docs.docker.com/

- Install kubectl

Here is how to install kubectl

If you have the Homebrew package manager installed, you can use that:

brew install kubectl

On Linux, the commands are going to be:

curl -LO [https://storage.googleapis.com/kubernetes-release/release/$](https://storage.googleapis.com/kubernetes-release/release/$)(curl -s https:/\

/storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/linux/amd64/kubec\

tl

chmod +x ./kubectl

sudo mv ./kubectl /usr/local/bin/kubectl

To make sure you have kubectl installed, you can run

kubectl version --output=yaml

Install local cluster

To install minikube, you require a virtualisation technology such as VirtualBox. If you are on windows, you might want to use hyperv instead. Minikube provides a single node instance that you can use in combination with kubectl.

It supports DNS, Dashboards, CNI, NodePorts, Config Maps, etc. It also supports multiple hypervisors, such as Virtualbox, kvm, etc.

In my case I am going to be using microk8s since I had several issues getting started with minikube. However, please don't let this put you off. Please look for yourself into each tool and decide which one you like the best.

Microk8s provides a lightweight Kubernetes installation on your local machine. Overall, it is much easier to install on Linux using snap since it does not require any virtualization tools.

sudo snap install microk8s --classic

However, also the Windows and Mac installation are quite straightforward so have a look at those on their website.

Make sure that kubectl has access directly to your cluster.

If you have multiple clusters configured, you can switch between them using your kubectl commands

To show the different clusters available:

kubectl config get-contexts

to switch to a different cluster:

kubectl config use-context <name of the context>

Once we are connected to the right cluster, we can ask kubectl to show us our nodes

kubectl get nodes

Or you could see the current pods that are running on your cluster — if it is a new cluster, you likely don't have any pods running.

kubectl get pods

In the case of minikube and microk8s, we have only one node

Running Pods

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

- How Pods and the Pod Lifecycle work in Kubernetes

- Pods and Containers - Kubernetes Networking | Container Communication inside the Pod

Example Notes

Practical Example

Fork the following repository: https://github.com/vfarcic/k8s-specs

git clone https://github.com/vfarcic/k8s-specs.git

cd k8s-specs

Create a mongo DB database

kubectl run db --image mongo \

--generator "run-pod/v1"

If you want to confirm that the pod was created do: kubectl get pods

Note that if you do not see any output right away that is ok; the mongo image is really big so it might take a while to get the pod up and running.

Confirm that the image is running in the cluster

docker container ls -f ancestor=mongo

To delete the pod run

kubectl delete pod db

Delete the pod above since it was not the best way to run the pod.

- Pods should be created in a declarative format. However, in this case, we created it in an imperative way — BAD!

To look at the pod definition:

cat pod/db.yml

apiVersion: v1 // means the version 1 of the Kubernetes pod API; API version and kind has to be provided -- it is mandatory

kind: Pod

metadata: // the metadata provides information on the pod, it does not specifiy how the pod behaves

name: db

labels:

type: db

vendor: MongoLabs // I assume, who has created the image

spec:

containers:

- name: db

image: mongo:3.3 // image name and tag

command: ["mongod"]

args: ["--rest", "--httpinterface"] // arguments, defined in an array

In the case of controllers, the information provided in the metadata has a practical purpose. However, in this case, it merely provides descriptive information.

All arguments that can be used in pods are defined in https://kubernetes.io/docs/reference/generated/kubernetes-api/v1.17/#pod-v1-core

With the following command, we can create a pod that is defined in the pod.yml file

kubectl create -f pod/db.yml

to view the pods (in json format)

kubectl get pods -o json

We can see that the pod went through several stages (stages detailed in the video on pods)

In the case of microk8s, both master and worker nodes run on the same machine.

To verify that the database is running, we can go ahead an run

kubectl exec -it db sh // this will start a terminal inside the running container

echo 'db.stats()'

exit

Once we do not need a pod anymore, we should delete it

kubectl delete -f pod/db.yml

- Kubernetes will first try to stop a pod gracefully; it will have 30s to shut down.

- After the "grace period" a kill signal is sent

Additional notes

- Pods cannot be split across nodes

- Storage within a pod (volumes) can be accessed by all the containers within a pod

Run multiple containers with in a pod

Most pods should be made of a single container; multiple containers within one pod is not common nor necessarily desirable

Look at

cat pod/go-demo-2.yml

of the closed repository (the one cloned at the beginning of these notes)

The yml defines the use of two containers within one pod

kubectl create -f pod/go-demo-2.yml

kubectl get -f pod/go-demo-2.yml

To only retrieve the names of the containers running in the pod

kubectl get -f pod/go-demo-2.yml \

-o jsonpath="{.spec.containers[*].name}"

Specify the name of the container for which we want to have the logs

kubectl logs go-demo-2 -c db

- livenessProbes are used to check whether a container should be running

Have a look at

cat pod/go-demo-2-health.yml

within the cloned repository.

Create the pod

kubectl create \

-f pod/go-demo-2-health.yml

wait a minute and look at the output

kubectl describe \

-f pod/go-demo-2-health.yml

Kubernetes ReplicaSet

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

Example Notes

ReplicaSets

It is usually not recommended to create pods manually but instead use multiple instances of the same application; these are then identical pods, called replicas. You can specify the desired number of replicas within the ReplicaSet.

A ReplicaSet ensures that a certain number of pods are running at any point in time. If there are more pods running than the number specified by the ReplicaSet, the ReplicaSet will kill the pods.

Similarly, if any pod dies and the total number of pods is fewer than the defined number of pods, the ReplicaSet will spin up more pods.

Each pod is supposed to run a single instance of an application. If you want to scale your application horizontally, you can create multiple instances of that pod.

The pod ReplicaSet is used for scaling pods in your Kubernetes cluster.

Such a set of replicated Pods are created and managed by a controller, such as a Deployment.

As long as the primary conditions are met: enough CPU and memory is available in the cluster, the ReplicaSet is self-healing; it provides fault tolerance and high availibility.

It's only purpose is to ensure that the specified number of replicas of a service is running.

All pods are managed through Controllers and Services. They know about the pods that they have to manage through the in-YAML defined Labels within the pods and the selectors within the Controllers/Services. Remember the metadata field from one of the previous days — in the case of ReplicaSets, these labels are used again.

Some Practice

Clone the following repository: https://github.com/vfarcic/k8s-specs and enter into the root folder

cd k8s-specs

Looking at the following example

cat rs/go-demo-2.yml

- The selector is used to specify which pods should be included in the replicaset

- ReplicaSets and Pods are decoupled

- If the pods that match the replicaset, it does not have to do anything

- Similar to how the ReplicaSet would scale pods to match the definition provided in the yaml, it will also terminate pods if there are too many

- the spec.template.spec defines the pod

Next, create the pods

kubectl create -f rs/go-demo-2.yml

We can see further details of your running pods through the kubectl describe command

kubectl describe -f rs/go-demo-2.yml

To list all the pods, and to compare the labels specified in the pods match the ReplicaSet

kubectl get pods --show-labels

You can call the number of replicasets by running

kubectl get replicasets

ReplicaSets are named using the same naming convention as used for pods.

Difference between ReplicaSet and Replication Controller

They both serve the same purpose — the Replication Controller is being deprecated.

Operating ReplicaSets

You can delete a ReplicaSet without deleting the pods that have been created by the replicaset

kubectl delete -f rs/go-demo-2.yml \

--cascade=false

And then the ReplicaSet can be created again

kubectl create -f rs/go-demo-2.yml \

--save-config

the —save-config flag ensures that our configurations are saved, which allows us to do more specific tasks later on.

Kubernetes Deployments

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

Example Notes

This little exercise will be based on the following application: https://github.com/anais-codefresh/react-article-display

Then we will create a deployment

apiVersion: apps/v1

kind: Deployment

metadata:

name: react-application

spec:

replicas: 2

selector:

matchLabels:

run: react-application

template:

metadata:

labels:

run: react-application

spec:

containers:

- name: react-application

image: anaisurlichs/react-article-display:master

ports:

- containerPort: 80

imagePullPolicy: Always

More information on Kubernetes deployments

- A deployment is a Kubernetes object that makes it possible to manage multiple, identical pods

- Using deployments, it is possible to automate the process of creating, modifying and deleting pods — it basically manages the lifecycle of your application

- Whenever a new object is created, Kubernetes will ensure that this object exist

- If you try to set-up pods manually, it can lead to human error. On the other hand, using deployments is a better way to prevent human errors.

- The difference between a deployment and a service is that a deployment ensures that a set of pods keeps running by creating pods and replacing broken prods with the resource defined in the template. In comparison, a service is used to allow a network to access the running pods.

- Deploy a replica set or pod

- Update pods and replica sets

- Rollback to previous deployment versions

- Scale a deployment

- Pause or continue a deployment

Create deployment

kubectl create -f deployment.yaml

Access more information on the deployment

kubectl describe deployment <deployment name>

Create the service yml

apiVersion: v1

kind: Service

metadata:

name: react-application

labels:

run: react-application

spec:

type: NodePort

ports:

- port: 8080

targetPort: 80

protocol: TCP

name: http

selector:

run: react-application

Creating the service with kubectl expose

kubectl expose deployment/my-nginx

This will create a service that is highly similar to our in yaml defined service. However, if we want to create the service based on our yaml instead, we can run:

kubectl create -f my-pod-service.yml

How is the Service and the Deployment linked?

The targetPort in the service yaml links to the container port in the deployment. Thus, both have to be, for example, 80.

We can then create the deployment and service based on the yaml, when you look for "kubectl get service", you will see the created service including the Cluster-IP. Take that cluster IP and the port that you have defined in the service e.g. 10.152.183.79:8080 basically

kubectl get service

Alternatively, for more information of the service

kubectl get svc <service name> -o yaml

-o yaml: the data should be displayed in yaml format

Delete the resources by

kubectl delete service react-application

kubectl delete deployment react-application

// in this case, your pods are still running, so you would have to remove them individually

Note: replace react-application with the name of your service.

Namespaces

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

Example Notes

In some cases, you want to divide your resources, provide different access rights to those resources and more. This is largely driven by the fear that something could happen to your precious production resources.

However, with every new cluster, the management complexity will scale — the more clusters you have, the more you have to manage — basically, the resource overhead of many small clusters is higher than of one big one. Think about this in terms of houses, if you have one big house, you have to take care of a lot but having several small houses, you have x the number of everything + they will be affected by different conditions.

Practical

Let's get moving, you only learn by doing.

Clone this repository https://github.com/vfarcic/k8s-specs

cd k8s-specs

and then, we will use this application

cat ns/go-demo-2.yml

Then we do a nasty work-around to specify the image tag used in the pod

IMG=vfarcic/go-demo-2

TAG=1.0

cat ns/go-demo-2.yml \

| sed -e \

"s@image: $IMG@image: $IMG:$TAG@g" \

| kubectl create -f -

When the -f argument is followed with a dash (-), kubectl uses standard input (stdin) instead of a file.

To confirm that the deployment was successful

kubectl rollout status \

2 deploy go-demo-2-api

Which will get us the following output

hello, release 1.0!

Almost every service are Kubernetes Objects.

kubectl get all

Gives us a full list of all the resources that we currently have up and running.

The system-level objects within our cluster are usually not visible, only the objects that we created.

Within the same namespace, we cannot have twice the same object with exactly the same name. However, we can have the same object in two different namespaces.

Additionally, you could specify within a cluster permissions, quotas, policies, and more — will look at those sometimes later within the challenge.

We can list all of our namespaces through

kubectl get ns

Create a new namespaces

kubectl create namespace testing

Note that you could also use 'ns' for 'namespace'

Kubernetes puts all the resources needed to execute Kubernetes commands into the kube-system namespace

kubectl --namespace kube-system get all

Now that we have a namespace testing, we can use it for new deployments — however, specifying the namespace with each command is annoying. What we can do instead is

kubectl config set-context testing \

--namespace testing \

--cluster docker-desktop \

--user docker-desktop

In this case, you will have to change the command according to your cluster. The created context uses the same cluster as before — just a different namespace.

You can view the config with the following command

kubectl config view

Once we have a new context, we can switch to that one

kubectl config use-context testing

Once done, all of our commands will be automatically executed in the testing namespace.

Now we can deploy the same resource as before but specify a different tag.

TAG=2.0

DOM=go-demo-2.com

cat ns/go-demo-2.yml \

| sed -e \

"s@image: $IMG@image: $IMG:$TAG@g" \

| sed -e \

"s@host: $DOM@host: $TAG\.$DOM@g" \

| kubectl create -f -

to confirm that the rollout has finished

kubectl rollout status \

deployment go-demo-2-api

Now we can send requests to the different namespaces

curl -H "Host: 2.0.go-demo-2.com" \

2 "http://$(minikube ip)/demo/hello"

Deleting resources

It can be really annoying to have to delete all objects one by one. What we can do instead is to delete all resources within a namespace all at once

kubectl delete ns testing

The real magic of namespaces is when we combine those with authorization logic, which we are going to be looking at in later videos.

ConfigMaps

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

- https://matthewpalmer.net/kubernetes-app-developer/articles/ultimate-configmap-guide-kubernetes.html

Example Notes

ConfigMaps make it possible to keep configurations separately from our application images by injecting configurations into your container. The content/injection might be configuration files or variables.

It is a type of Volume.

ConfigMaps=Mount a source to a container

It is a directory or file of configuration settings.

Environment variables can be used to configure new applications. They are great unless our application is too complex.

If the application configuration is based on a file, it is best to make the file part of our Docker image.

Additionally, you want to use ConfigMap with caution. If you do not have any variations between configurations of your app, you do not need a ConfigMap. ConfigMaps let you easily fall into the trap of making specific configuration — which makes it harder to move the application and to automate its set-up. Resulting, if you do use ConfigMaps, you would likely have one for each environment.

So what could you store within a ConfigMap

A ConfigMap stores configuration settings for your code. Store connection strings, public credentials, hostnames, and URLs in your ConfigMap.

So make sure to not store any sensitive information within ConfigMap.

We first create a ConfigMap with

kubectl create cm my-config \

--from-file=cm/prometheus-conf.yml

Taking a look into that resource

**kubectl describe cm my-config**

ConfigMap is another volume that, like other volumes, need to mount

cat cm/alpine.yml

The volume mount section is the same, no matter the type of volume that we want to mount.

We can create a pod and make sure it is running

kubectl create -f cm/alpine.yml

kubectl get pods

And then have a look inside the pod

kubectl exec -it alpine -- \

ls /etc/config

You will then see a single file that is correlated to the file that we stored in the ConfigMap

To make sure the content of both files is indeed the same, you can use the following command

kubectl exec -it alpine -- \

cat /etc/config/prometheus-conf.yml

The —from-file argument in the command at the beginning can be used with files as well as directories.

In case we want to create a ConfigMap with a directory

kubectl create cm my-config \

--from-file=cm

and have a look inside

kubectl describe cm my-config

The create a pod that mounts to the ConfigMap

kubectl create -f cm/alpine.yml

kubectl exec -it alpine -- \

ls /etc/config

Make sure to delete all the files within your cluster afterwards

kubectl delete -f cm/alpine.yml

kubectl delete cm my-config

Furthermore, like every other Kubernetes resource, you can define ConfigMaps through Kubernetes YAML files. This actually (probably the easiest way) — write the ConfigMap in YAML and mount it as a Volume

We can show one of our existing ConfigMaps in YAML

kubectl get cm my-config -o yaml

Additionally we can take a look at this file within our repository that has both a Deployment object and a ConfigMap

cat cm/prometheus.yml

kind: ConfigMap

apiVersion: v1

metadata:

name: example-configmap

data:

# Configuration values can be set as key-value properties

database: mongodb

database_uri: mongodb://localhost:27017

# Or set as complete file contents (even JSON!)

keys: |

image.public.key=771

rsa.public.key=42

And then create the ConfigMap like any other resource

kubectl apply -f config-map.yaml

kind: Pod

apiVersion: v1

metadata:

name: pod-using-configmap

spec:

# Add the ConfigMap as a volume to the Pod

volumes:

# `name` here must match the name

# specified in the volume mount

- name: example-configmap-volume

# Populate the volume with config map data

configMap:

# `name` here must match the name

# specified in the ConfigMap's YAML

name: example-configmap

containers:

- name: container-configmap

image: nginx:1.7.9

# Mount the volume that contains the configuration data

# into your container filesystem

volumeMounts:

# `name` here must match the name

# from the volumes section of this pod

- name: example-configmap-volume

mountPath: /etc/config

Kubernetes Service

100Days Resources

Learning Resources

- Official Documentation

- https://kubernetes.io/docs/reference/kubectl/cheatsheet/

- https://katacoda.com/courses/kubernetes

- Guides and interactive tutorial within the Kubernetes docs https://kubernetes.io/docs/tutorials/kubernetes-basics/expose/expose-intro/

- Kubernetes by example https://kubernetesbyexample.com/ created by OpenShift

Example Notes

Pods are formed, destroyed and never repaired. You would not repair an existing, running pod but rather deploy a new, healthy one.

Controllers, along with the Scheduler inside your Kubernetes cluster are making sure that pods are behaving correctly, they are monitoring the pods.

So far, only containers within the same pod can talk to each other through localhost. This prevents us from scaling our application. Thus we want to enable communication between pods. This is done with Kubernetes Services.

Kubernetes Services provide addresses through which associated Pods can be accessed.

A service is usually created on top of an existing deployment.

Follow along

Clone the following repository: https://github.com/vfarcic/k8s-specs

And prepare our minikube, microk8s or whatever you are using as your local cluster 🙂

cd k8s-specs

git pull

minikube start --vm-driver=virtualbox

kubectl config current-context

For this exercise, we are going to create the following ReplicaSet, similar to what we have done in the previous video. The definition will look as follows:

cat svc/go-demo-2-rs.yml

Now create the ReplicaSet:

kubectl create -f svc/go-demo-2-rs.yml

// get the state of it

kubectl get -f svc/go-demo-2-rs.yml

Before continuing with the next exercises, make sure that both replicas are ready

With the kubectl expose command, we can tell Kubernetes that we want to expose a resource as service in our cluster

kubectl expose rs go-demo-2 \

--name=go-demo-2-svc \

--target-port=28017 \ // this is the port that the MongoDB interface is listening to

--type=NodePort

We can have by default three different types of Services

ClusterIP

ClusterIP is used by default. It exposes the service only within the cluster. By default you want to be using ClusterIP since that prevents any external communication and makes your cluster more secure.

NodePort

Allows the outside world to access the node IP

and LoadBalancer

The LoadBalancer is only useful when it is combined with the LoadBalancer of your cloud provider.

The process when creating a new Service is something like this:

- First, we tell our API server within the master node in our cluster it should create a new service — in our case, this is done through kubectl commands.

- Within our cluster, inside the master node, we have an endpoint controller. This controller will watch our API server to see whether we want to create a new Service. Once it knows that we want to create a new service, it will create an endpoint object.

- The kube-proxy watches the cluster for services and enpoints that it can use to configure the access to our cluster. It will then make a new entry in its iptable that takes note of the new information.

- The Kube-DNS realises that there is a new service and will add the db’s record to the dns server (skydns).

Taking a look at our newly created service:

kubectl describe svc go-demo-2-svc

- All the pods in the cluster can access the targetPort

- The NodePort automatically creates the clusterIP

- Note that if you have multiple ports defined within a service, you have to name those ports

Let’s see whether the Service indeed works.

PORT=$(kubectl get svc go-demo-2-svc

-o jsonpath="{.spec.ports[0].nodePort}")

IP=$(minikube ip)

open "http://$IP:$PORT"

Creating Services in a Declarative format

cat svc/go-demo-2-svc.yml

- The service is of type NodePort - making it available within the cluster

- TCP is used as default protocol

- The selector is used by the service to know which pods should receive requests (this works the same way as the selector within the ReplicaSet)

With the following command, we create the service and then get the sevrice

kubectl create -f svc/go-demo-2-svc.yml

kubectl get -f svc/go-demo-2-svc.yml

We can look at our endpoint through

kubectl get ep go-demo-2 -o yaml

- The subset responds to two pods, each pod has its own IP address

- Requests are distributed between these two nodes

Make sure to delete the Service and ReplicaSet at the end

kubectl delete -f svc/go-demo-2-svc.yml

kubectl delete -f svc/go-demo-2-rs.yml

Ingress

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

Example Notes

Ingress is responsible for managing the external access to our cluster. Whereby it manages

- forwarding rules based on paths and domains

- SSl termination

- and several other features.

The API provided by Ingress allows us to replace an external proxy with a loadbalancer.

Things we want to resolve using Ingress

- Not having to use a fixed port — if we have to manage multiple clusters, we would have a hard time managing all those ports

- We need standard HTTPS(443) or HTTP (80) ports through a predefined path

When we open an application, the request to the application is first received by the service and LoadBalancer, which is the responsible for forwarding the request to either of the pods it is responsible for.

To make our application more secure, we need a place to store the application's HTTPS certificate and forwarding. Once this is implemented, we have a mechanism that accepts requests on specific ports and forwards them to our Kubernetes Service.

The Ingress Controller can be used for this.

Unlike other Kubernetes Controllers, it is not part of our cluster by default but we have to install it separately.

If you are using minikube, you can check the available addons through

minikube addons list

And enable ingress (in case it is not enabled)

minikube addons enable ingress

If you are on microk8s, you can enable ingress through

microk8s enable ingress

You can check whether it is running through — if the pods are running for nginx-ingress, they will be listed

kubectl get pods --all-namespaces | grep nginx-ingress

If you receive an empty output, you might have to wait a little bit longer for Ingress to start.

Here is the YAML definition of our Ingress resource

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: react-application

annotations:

kubernetes.io/ingress.class: "nginx"

ingress.kubernetes.io/ssl-redirect: "false"

nginx.ingress.kubernetes.io/ssl-redirect: "false"

spec:

rules:

- http:

paths:

- path: /demo

pathType: ImplementationSpecific

backend:

service:

name: react-application

port:

number: 8080

- The annotation section is used to provide additional information to the Ingress controller.

- The path is the path after the

You can find a list of annotations and the controllers that support them on this page: https://github.com/kubernetes/ingress-nginx/blob/master/docs/user-guide/nginx-configuration/annotations.md

We have to set the ssl redirect to false since we do not have an ssl certificate.

You can create the resource through

kubectl create \

-f <name of your file>

If your application's service is set to NodePort, you will want to change it back into ClusterIP since there is no need anymore for NodePort.

What happens when we create a new Ingress resource?

- kubectl will send a request to the API Server of our cluster requesting the creation of a new Ingress resource

- The ingress controller is consistently checking the cluster to see if there is a new ingress resource

- Once it sees that there is a new ingress resource, it will configure its loadbalancer

Ingress is a kind of service that runs on all nodes within your cluster. As long as requests match any of the rules defined within Ingress, Ingress will forward the request to the respective service.

To view the ingress running inside your cluster, use

kubectl get ing

Note that it might not work properly on microk8s.

Service Mesh

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

Example Notes

The goal is:

- Higher Portability: Deploy it wherever

- Higher Agility: Update whenever

- Lower Operational Management: Invest low cognitive

- Lower Security Risk

How do Services find each other?

- Answering this question allows us to break down the value of Service Mesh — different Services have to find each other.

- If one service fails, the traffic has to be routed to another service so that requests don't fail

Service-discovery can become the biggest bottleneck.

Open platform, independent service mesh.

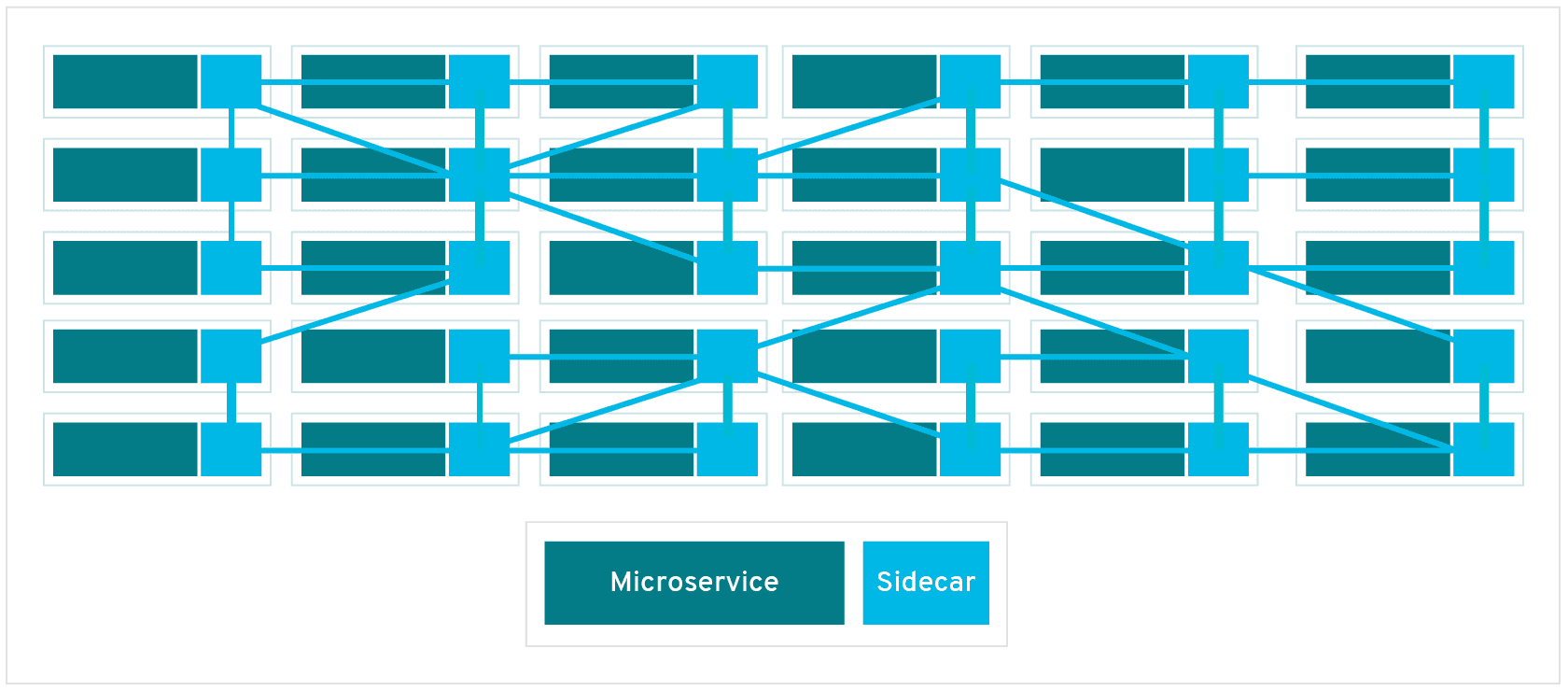

In its simplest form a service mesh is a network of your microservices, managing the traffic between services. This allows it to manage the different interactions between your microservices.

A lot of the responsibilities that a service mesh has could be managed on an application basis. However, with the service mesh takes that logic out of the application specific services and manages those on an infrastructure basis.

Why do you need Service Mesh?

Istio is a popular solution for managing communication between microservices.

When we move from monolithic to microservice application, we run into several issues that we did not have before. It will need the following setup

- Each microservice has its own business logic — all service endpoints must be configured

- Ensure Security standards with firewall rules set-up — every service inside the cluster can talk to every other service if we do not have any additional security inside — for more important applications this is not secure enough. This may result in a lot of complicated configuration.

To better manage the application configuration, everything but the business logic could be packed into its own Sidecar Proxy, which would then be responsible to

- Handle the networking logic

- Act as a Proxy

- Take care of third-party applications

- Allow cluster operators to configure everything easily

- Enable developers to focus on the actual business logic

A service mesh will have a control plane that will inject this business logic automatically into every service. Once done, the microservices can talk to each other through proxies.

Core feature of service mesh: Traffic Splitting:

- When you spin up a new service in response to a high number of requests, you only want to forward about 10% of the traffic to the new Service to make sure that it really works before distributing the traffic between all services. This may also be referred to as Canary Deployment

"In a service mesh, requests are routed between microservices through proxies in their own infrastructure layer. For this reason, individual proxies that make up a service mesh are sometimes called “sidecars,” since they run alongside each service, rather than within them. Taken together, these “sidecar” proxies—decoupled from each service—form a mesh network."

"A sidecar proxy sits alongside a microservice and routes requests to other proxies. Together, these sidecars form a mesh network."

Service Mesh is just a paradigm and Istio is one of the implementations

Istio allows Service A and Service B to communicate to each other. Once your microservices scale, you have more services, the service mesh becomes more complicated — it becomes more complicated to manage the connection between different services. That's where Istio comes in.

It runs on

- Kubernetes

- Nomad

- Console

I will focus on Kubernetes.

Features

- Load Balancing: Receive some assurance of load handling — enabled some level of abstraction that enables services to have their own IP addresses.

- Fine Grain Control: to make sure to have rules, fail-overs, fault connection

- Access Control: Ensure that the policies are correct and enforceable

- Visibility: Logging and graphing

- Security: It manages your TSL certificates

Additionally, Service Mesh makes it easier to discover problems within your microservice architecture that would be impossible to discover without.

Components — Connect to the Control Plane API within Kubernetes — note that this is the logic of Istio up to version 1.5. The latest versions only deal with Istiod.

- Pilot: Has A/B testing, has the intelligence how everything works, the driver of Istio

- Cit: Allows Service A and Service B to talk to each other

How do we configure Istio?

- You do not have to modify any Kubernetes Deployment and Service YAML files

- Istio is configured separately from application configuration

- Since Istio is implemented through Kubernetes Custom Resource Definitions (CRD), it can be easily extended with other Kubernetes-based plug-ins

- It can be used like any other Kubernetes object

The Istio-Ingress Gateway is an entry-point to our Kubernetes cluster. It runs as a pod in our cluster and acts as a LoadBalancer.

Service Mesh Interface

With different projects and companies creating their own Service Mesh, the need for standards and specifications arise. One of those standards is provided by the Service Mesh Interface (SMI). In its most basic form, SMI provides a list of Service Mesh APIs. Separately SMI is currently a CNCF sandbox project.

SMI provides a standard interface for Service Mesh on Kubernetes

- Provides a basic set of features for the most common use cases

- Flexible to support new use case over time

Website with more information

SMI covers the following

- Traffic policy – apply policies like identity and transport encryption across services

- Traffic telemetry – capture key metrics like error rate and latency between services

- Traffic management – shift traffic between different services

Other Service Mesh Examples

- Gloo Mesh: Enterprise version of Istio Service Mesh but also has a Gloo Mesh open source version.

- Linkerd: Its main advantage is that it is lighter than Istio itself. Note that Linkerd was origially developed by Buoyant. Linkerd specifically, is run through an open governance model.

- Nginx service mesh: Focused on the data plane and security policies; platform agnostic; traffic orchestration and management

Kubernetes Volumes

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

- https://kubernetes.io/docs/tasks/configure-pod-container/configure-volume-storage/

- https://codeburst.io/kubernetes-storage-by-example-part-1-27f44ae8fb8b

- https://youtu.be/0swOh5C3OVM

Example Notes

We cannot store data within our containers — if our pod crashes and restarts another container based on that container image, all of our state will be lost. Kubernetes does not give you data-persistence out of the box.

Volumes are references to files and directories made accessible to containers that form a pod. So, they basically keep track of the state of your application and if one pod dies the next pod will have access to the Volume and thus, the previously recorded state.

There are over 25 different Volume types within Kubernetes — some of which are specific to hosting providers e.g. AWS

The difference between volumes is the way that files and directories are created.

Additionally, Volumes can also be used to access other Kubernetes resources such as to access the Docker socket.

The problem is that the storage has to be available across all nodes. When a pod fails and is restarted, it might be started on a different node.

Overall, Kubernetes Volumes have to be highly error-resistant — and even survive a crash of the entire cluster.

Volumes and Persistent Volumes are created like other Kubernetes resources, through YAML files.

Additionally, we can differentiate between remote and local volumes — each volume type has its own use case.

Local volumes are tied to a specific node and do not survive cluster disasters. Thus, you want to use remote volumes whenever possible.

apiVersion: v1

kind: Pod

metadata:

name: empty-dir

spec:

containers:

- name: busybox-a

command: ['tail', '-f', '/dev/null']

image: busybox

volumeMounts:

- name: cache

mountPath: /cache

- name: busybox-b

command: ['tail', '-f', '/dev/null']

image: busybox

volumeMounts:

- name: cache

mountPath: /cache

volumes:

- name: cache

emptyDir: {}

Create the resource:

kubectl apply -f empty-dir

Write to the file:

kubectl exec empty-dir --container busybox-a -- sh -c "echo \"Hello World\" > /cache/hello.txt"

Read what is within the file

kubectl exec empty-dir --container busybox-b -- cat /cache/hello.txt

However, to ensure that the data will be saved beyond the creation and deletion of pods, we need Persistent volumes. Ephemeral volume types only have the lifetime of a pod — thus, they are not of much use if the pod crashes.

A persistent volume will have to take the same storage as the physical storage.

Storage in Kubernetes is an external plug-in to our cluster. This way, you can also have multiple different storage resources.

The storage resources is defined within the PersistentVolume YAML

A hostPath volume mounts a file or directory from the host node’s filesystem into your Pod. This is not something that most Pods will need, but it offers a powerful escape hatch for some applications.

— Kubernetes — Volumes

apiVersion: v1

kind: Pod

metadata:

name: host-path

spec:

containers:

- name: busybox

command: ['tail', '-f', '/dev/null']

image: busybox

volumeMounts:

- name: data

mountPath: /data

volumes:

- name: data

hostPath:

path: /data

Create the volume

kubectl apply -f empty-dir

Read from the volume

kubectl exec host-path -- cat /data/hello.txt

KinD

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

Example Notes

Setting up Kind on Windows and Cluster Comparison

These are the docs that I used to set-up all of my resources on Windows:

Setting up the Ubuntu in Windows

- https://docs.microsoft.com/en-us/windows/wsl/install-win10#step-4---download-the-linux-kernel-update-package

- https://github.com/microsoft/WSL/issues/4766

Use WSL in Code

- https://docs.docker.com/docker-for-windows/wsl/

- https://marketplace.visualstudio.com/items?itemName=ms-vscode-remote.remote-wsl

Kubectl installation

Create Kind cluster

If you want to run microk8s on WSL, you have to get a snap workaround described here

Running a local cluster

Especially when you are just getting started, you might want to spin up a local cluster on your machine. This will allow you to run tests, play around with the resources and more without having to worry much about messing something up :) — If you watched any of my previous videos, you will have already a good understand of how much I enjoy trying out different things — setting things up just to try something out — gain a better understanding — and then to delete everything in the next moment.

Also, you might have seen that I already have two videos on microk8s —mainly since the minikube set-up on my Ubuntu did not properly work. Now that I am on Windows, I might actually have more options. So let's take a look at those and see how they compare.

Kubernetes in Docker

When you install Docker for Desktop (or however you call it) you can enable Kubernetes:

The set-up will take a few seconds but then you have access to a local cluster both from the normal Windows terminal (I am still new to Windows, so excuse any terminology that is off) or through the WSL.

kubectl config get-contexts

and you will see a display of the different clusters that you have set-up. This will allow you to switch to a different cluster:

kubectl config use-context <context name>

Below are several other options highlighted.

minikube

minikube is probably the best known of the three; maybe because it is the oldest. When you are using minikube, it will spin up a VM that runs a single Kubernetes node. To do so it needs hypervisor. Now, if you have never interacted with much virtualisation technology, you might think of a hypervisor as something like this:

I assure you, it is not. So what is a Hypervisor then? A Hypervisor is basically a form of software, firmware, or hardware that is used to set-up virtual environments on your machine.

Running minikube, you can spin up multiple nodes as well, each will be running their own VM (Virtual Machine).

For those of you, who are really into Dashboards, minikube provides a Dashboard, too! Personally, I am not too much of a fan but that is your choice. If you would like some sort of Dashboard, I highly recommend you k9s. Here is my introductory video if you are curious.

This is what the minikube Dashboard looks like — just your usual UI 😊

Now how easy is the installation of minikube? Yes, I mentioned that I had some problems installing minikube on Ubuntu and thus, went with microk8s. At that time, I did not know about kind yet. Going back to the original question, if you are using straight up Windows it is quite easy to install, if you are using Linux in Windows however, it might be a bit different — tbh I am a really impatient person, so don't ask me.

What is kind?

Kind is quite different to minikube, instead of running the nodes in VMs, it will run nodes as Docker containers. Because of that, it is supposed to start-up faster — I am not sure how to test this, they are both spinning up the cluster in an instance and that is good enough for me.

However, note that kind requires more space on your machine to run than etiher microk8s or minikube. In fact, microk8s is actually the smallest of the three.

Like detailed in this article, you can

- With 'kind load docker-image my-app:latest' the image is available for use in your cluster

Which is an additional feature.

If you decide to use kind, you will get the most out of it if you are fairly comfortable to use YAML syntax since that will allow you to define different cluster types.

The documentation that I used to install it

microk8s

Microk8s is in particular useful if you want to run a cluster on small devices; it is better tested in ubuntu than the other tools. Resulting, you can install it with snap super quickly! In this case, it will basically run the cluster separate from the rest of the stuff on your computer.

It also allows for multi-node clusters, however, I did not try that yet, so I don't know how well that actually works.

Also note that if you are using microk8s on MacOS or Windows, you will need a hypevisor of sorts. Running it on Ubuntu, you do not.

My video

Direct comparison

This article by Max Brenner offers a really nice comparison between the different tools with a comparison table https://brennerm.github.io/posts/minikube-vs-kind-vs-k3s.html

K3s and K3sup

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

Example Notes

When you are choosing a Kubernetes distribution, the most obvious one is going to be K8s, which is used by major cloud vendors and many more. However, if you just want to play around on your local machine with Kubernetes, test some tools or learn about Kubernetes resources, you would likely go with something like minikube, microk8s or kind.

Now we just highlighted two use cases for different types of Kubernetes clusters. What about use cases where you want to run Kubernetes on really small devices, such as raspberry pis? What about IoT devices? Generally, devices where you want to run containers effectively without consuming too much resources.

In those cases, you could go with K3s.

What is K3s?

In short, k3s is half the size in terms of memory footprint than "normal Kubernetes". The origin of k3s is Rio, which was developed by Rancher. However, they then decided to branch it out of Rio into its own tool — which became K3s. It was more of a figure it out by doing it.

The important aspect of k3s is that it was oriented around production right from the beginning.

You want to be able to run Kubernetes in highly resource constraint environments — which is not always possible with pure Kubernetes.

K3s is currently a CNCF sandbox project — the development is led by Rancher, which provides Kubernetes as a service (?)

- Instead of Docker, it runs Containerd — Note that Kubernetes itself is also moving to Containerd as its container runtime. This does not mean that you will not be able to run Docker containers. If I just scared you, please watch this video to clarify.

Designed based on the following goals:

- Lightweight: Show work on small resource environment

- Compatibility: You should be able to use most of the tools you can use with "normal k8s"

- Ethos: Everything you need to use k3s is built right in

Btw: K3s is described as the second most popular Kubernetes distribution (Read in a Medium post, please don't quote me on this)

How does k3s differ from "normal" Kubernetes?

Do you have questions? We have answers!

Now I recorded last week an episode with Alex Ellis, who built k3sup, which can be used to deploy k3s. Here are some of the questions that I had before the live recording that are answered within the recording itself:

- Let's hear a bit about the background of k3sup — how did it come about?

- How are both pronounced?

- How would you recommend learning about k3s — let's assume you are complete new, where do you start?

- Walking through the k3s architecture https://k3s.io/

- What is the difference between k8s and k3s

- When would I prefer to use k8s over k3s

- What can I NOT do with k3s? Or what would I NOT want to do?

- Do I need a VM to run k3s? It is mentioned in some blog posts — let's assume I do not have a raspberry pi — I have a VM, you can set them up quite easily; why would I run K3s on a VM?

- So we keep discussing that this is great for a Kubernetes homelab or IoT devices — is that not a bit of an overkills to use Kubernetes with it?

- Is the single node k3s similar to microk8s — having one instance that is both worker node

Use cases for k3s:

- Single node clusters

- Edge

- IoT

- CI

- Development Environments and Test Environments

- Experiments, useful for academia

- ARM

- Embedding K8s

- Situations where a PhD in K8s clusterology is infeasible

Install k3s

There are three ways for installing k3s*

- The quick way shown below directly with k3s

- Supposedly easier way with k3s up

- The long way that is detailed over several docs pages https://rancher.com/docs/k3s/latest/en/installation/

*Actually there are several more ways to install k3s like highlighted in this video:

This is the installation script:

curl -sfL https://get.k3s.io | sh -

Note that this might take up to 30 sec. Once done, you should be able to run

k3s kubectl get node

What it does

The K3s service will be configured to automatically restart after node reboots or if the process crashes or is killed Additional utilities will be installed, including kubectl, crictl, ctr, k3s-killall.sh, and k3s-uninstall.sh A kubeconfig file will be written to /etc/rancher/k3s/k3s.yaml and the kubectl installed by K3s will automatically use it

Once this is done, you have to set-up the worker nodes that are used by k3s

curl -sfL https://get.k3s.io | K3S_URL=https://myserver:6443 K3S_TOKEN=mynodetoken sh -

Once installed, access the cluster

Leverage the KUBECONFIG environment variable:

export KUBECONFIG=/etc/rancher/k3s/k3s.yaml

kubectl get pods --all-namespaces

helm ls --all-namespaces

If you do not set KUBECONFIG as an environment variable,

Installing with k3sup

The following is taken from the k3sup README file

$ curl -sLS https://get.k3sup.dev | sh

$ sudo install k3sup /usr/local/bin/

$ k3sup --help

There is a lot to unpack... WHAT DOES THIS SENTENCE MEAN?

This tool uses ssh to install k3s to a remote Linux host. You can also use it to join existing Linux hosts into a k3s cluster as agents.

If you want to get an A to Z overview, watch the following video

If you are still wondering about what k3s, have a look at this video

How is Kubernetes modified?

- This is not a Kubernetes fork

- Added rootless support

- Dropped all third-party storage drivers, completely CSI is supported and preferred

Following this tutorial; note that there are many others that you could use — have a look at their documentation:

https://rancher.com/blog/2020/k3s-high-availability

Kustomize

100Days Resources

- Video by Anais Urlichs

- Add your blog posts, videos etc. related to the topic here!

Learning Resources

- Their webiste has lots of amazing videos

Example Notes

Configuration Management for Kubernetes

— "A template free way to customize application configuration that simplifies the use of off-the-shelf applications"

When I create a YAML, the manifests go into the API server of the main node; the cluster then aims to create the resources within the cluster to match the desired state defined in the YAML

Different to what we have seen before in the videos, YAML can get super complex! Additionally, there are several aspects of the state of our deployment that we want to frequently change. Including:

- Namespaces, Labels, Container Registry, Tags and more

Then, we have resources and processes that we want to change a bit less frequently, such as

- Management Parameters

- Environment-specific processes and resources

- Infrastructure mapping

Kustomize allows you to specify different values of your Kubernetes resources for different situations.

To make your YAML resources more dynamic and to apply variations between environments, you can use Kustomize.

Let's get started using Kustomize

Install Kustomize — just reading about it is not going to help us.

Here is their official documentation

However, their options did not work for the Linux installation, which I also need on WSL — this one worked:

https://weaveworks-gitops.awsworkshop.io/20_weaveworks_prerequisites/15_install_kustomize.html

Kustomize is part of kubectl so it should work without additional installation using 'kubectl -k' to specify that you want to use kustomize.

Next, scrolling through their documentation, they provide some amazing resources with examples on how to use kubectl correctly — but I am looking for kustomize example

Have a look at their guides if you are curious https://kubectl.docs.kubernetes.io/guides/

So with kustomize, we want to have our YAML tempalte and then customize the values provided to that resource manifest. However, each directory that is referenced within kustomized must have its own kustomization.yaml file.

First, let's set-up a deployment and a service, like we did in one of the previous days.

The Deployment:

apiVersion: apps/v1

kind: Deployment

metadata:

name: react-application

spec:

replicas: 2

selector:

matchLabels:

run: react-application

template:

metadata:

labels:

run: react-application

spec:

containers:

- name: react-application

image: anaisurlichs/react-article-display:master

ports:

- containerPort: 80

imagePullPolicy: Always

env: